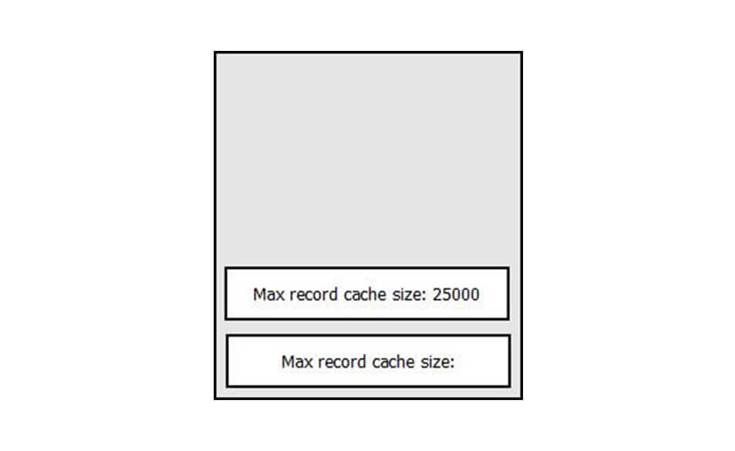

Today we use sophisticated, powerful object-oriented languages such as C# or Java for our daily programming work. Even though those languages have their own garbage collection support, a question remains: do we still have to think about memory and what is actually happening under the hood? The answer is yes! Whenever we build an application that works intensively with data, such as an importer tool, or a service that needs to stay alive for weeks or months, memory management will hit us hard very soon if we don't pay attention to it. For example, a typical logging line looks like this:

```csharp
SearchLog.Write(_app, LogSeverity.Normal, SearchLogAction.Index, "Max record cache size: " + EntityDtoCache.RecordCacheSize);
```

Even though this line, taken as an isolated case, won't cause a big problem, it can when combined with a few more similar lines or placed in a large loop. This single line produces two objects in memory.

We can easily agree that this is not necessary. String concatenation is especially dangerous in loops: the performance of the tool can degrade badly, and significant memory fragmentation can even occur. Instead, for any kind of string concatenation we should use either string.Format or StringBuilder. Keep in mind that almost half of memory issues come from bad string handling.
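To make the advice a bit more concrete, here is a minimal sketch of both alternatives. The class and method names (`LogMessages`, `CacheSizeMessage`, `JoinIds`) are hypothetical and not part of the logging code shown earlier; only `string.Format` and `StringBuilder` are real framework APIs.

```csharp
using System;
using System.Text;

public static class LogMessages
{
    // Hypothetical helper: builds the log text with a format string
    // instead of the "+" operator.
    public static string CacheSizeMessage(int recordCacheSize)
    {
        return string.Format("Max record cache size: {0}", recordCacheSize);
    }

    // Hypothetical helper: joins values inside a loop. One StringBuilder
    // grows its internal buffer instead of creating a new string on
    // every iteration.
    public static string JoinIds(int[] ids)
    {
        var sb = new StringBuilder();
        for (int i = 0; i < ids.Length; i++)
        {
            if (i > 0)
            {
                sb.Append(", ");
            }
            sb.Append(ids[i]);
        }
        return sb.ToString();
    }
}
```

The loop is where the difference matters most: with `+=` every iteration would leave an intermediate string behind for the GC to clean up.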

But we won't go into detail about how StringBuilder or the Format function works; there are plenty of blogs and documentation describing them. The primary focus of this blog is to draw attention to why memory management is important and to provide a basic understanding of how to build a memory-efficient .NET application. I will briefly describe how memory management works in .NET.

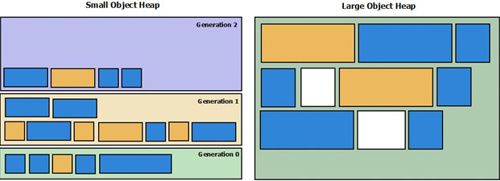

So, let's start. The entire memory of an application is divided into multiple segments rather than being one big piece. There is a segment of memory used for large objects, called the Large Object Heap (LOH). Objects of 85,000 bytes or more (roughly 85 KB) go there. There is also a part of memory used for smaller objects, called the Small Object Heap (SOH). The SOH is further divided into three parts: Generation 0, Generation 1 and Generation 2. The newest objects are created in the Generation 0 heap. When garbage collection runs, the surviving objects from Generation 0 are promoted to Generation 1, and the surviving objects from Generation 1 are promoted to Generation 2. Therefore Generation 0 contains the youngest objects while Generation 2 holds the oldest ones. When a specific generation has to be cleaned up, the GC always cleans the 'younger' generations as well. For example, if a Generation 1 cleanup occurs, a Generation 0 cleanup happens too, and so on. A Generation 2 cleanup is a bit special because it triggers cleanup of all younger generations and of the LOH too. The picture above illustrates memory divided into these heaps.
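The promotion between generations can be observed from code. The following is a minimal sketch that uses the real `GC.GetGeneration`, `GC.Collect` and `GC.MaxGeneration` APIs; the class and method names are hypothetical. Forcing collections with `GC.Collect` is done here only for demonstration and is usually not something to do in production code.

```csharp
using System;

public static class GenerationDemo
{
    // Records the generation of one small object before and after
    // two forced collections. On a typical run the values are 0, 1, 2,
    // but the runtime does not guarantee that exact sequence.
    public static int[] TrackPromotion()
    {
        var survivor = new object();
        var seen = new int[3];

        seen[0] = GC.GetGeneration(survivor);
        GC.Collect();
        seen[1] = GC.GetGeneration(survivor);
        GC.Collect();
        seen[2] = GC.GetGeneration(survivor);

        // Keep the object reachable until this point so it is not collected.
        GC.KeepAlive(survivor);
        return seen;
    }

    // Objects on the LOH are reported as belonging to the oldest
    // generation right after allocation.
    public static bool ReportsAsOldestGeneration(int sizeInBytes)
    {
        var buffer = new byte[sizeInBytes];
        return GC.GetGeneration(buffer) == GC.MaxGeneration;
    }
}
```

Calling `GenerationDemo.ReportsAsOldestGeneration(100000)` should return true with default settings, because a 100,000-byte array is above the LOH threshold.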

Whenever the SOH is cleaned up, the Common Language Runtime (CLR) decides whether to compact the data in it as well, closing the memory gaps created in the meantime. This is done to use memory more efficiently. Unfortunately, this doesn't happen with the LOH by default. Because the data in it is quite big, moving it could be time consuming and could hurt performance (in the image above, the white squares in the LOH represent memory gaps, that is, unused fragments). So, when an object in the LOH is cleaned up, empty space is left behind. The next time the CLR has to store a new big object, it first checks whether one of the gaps is large enough. If so, the gap or a part of it is used; if not, the object is appended at the end of the LOH. The important lesson from this part is that large objects can cause memory fragmentation, so bear that in mind when you work intensively with such objects. The CLR will expand the memory dedicated to the application several times when the LOH runs out of space, but in the end, when the limit is reached, you could get an OutOfMemoryException. For more details on how memory is organized and how cleanup works, please refer to the following links:

- Gotchas: https://www.red-gate.com/products/dotnet-development/ants-memory-profiler/learning-memory-management/memory-management-gotchas

- Misconceptions: https://www.red-gate.com/products/dotnet-development/ants-memory-profiler/learning-memory-management/misconceptions

- Fundamentals: https://www.red-gate.com/products/dotnet-development/ants-memory-profiler/learning-memory-management/memory-management-fundamentals

- MSDN: https://msdn.microsoft.com/en-us/library/ee787088%28v=vs.110%29.aspx
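Coming back to large objects for a moment: one common way to limit LOH fragmentation is to reuse big buffers instead of allocating a new one every time. Here is a minimal sketch, assuming `ArrayPool<byte>` from `System.Buffers` is available (it is built into .NET Core and later, and comes as a NuGet package for .NET Framework). The class and method names are hypothetical, and this is just one possible approach, not the only one.

```csharp
using System;
using System.Buffers;
using System.IO;

public static class LargeBufferReuse
{
    // 256 KB is above the 85,000-byte threshold, so a freshly allocated
    // array of this size would land on the LOH.
    private const int BufferSize = 256 * 1024;

    // Reads a stream to the end and returns the number of bytes read,
    // borrowing the large buffer from a shared pool.
    public static long CountBytes(Stream input)
    {
        // Rent may return an array larger than requested.
        byte[] buffer = ArrayPool<byte>.Shared.Rent(BufferSize);
        try
        {
            long total = 0;
            int read;
            while ((read = input.Read(buffer, 0, buffer.Length)) > 0)
            {
                total += read;
            }
            return total;
        }
        finally
        {
            // Hand the buffer back so the next caller can reuse it.
            ArrayPool<byte>.Shared.Return(buffer);
        }
    }
}
```

If this method is called thousands of times by an importer, the same few buffers keep circulating instead of leaving a trail of gaps in the LOH.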

Ok, that should be enough for a basic understanding of how memory works. Let us say a few words about GC types. Yes, there are a few types of GC :) The basic and most commonly used type is workstation GC. It is used for client workstations: a console app, a UI application or, in general, any standalone app. It is designed for applications that do not require intensive memory management. This GC is activated from time to time in the background and does its job. For server applications there is another type, server GC. It is enabled by default when you create an ASP.NET application, for example. It uses a separate heap and a dedicated GC thread per logical processor and collects them in parallel, which provides higher throughput and scalability for applications. To enable server garbage collection manually, put the gcServer XML element in your app configuration. Take a look at the following:

```xml
<configuration>
  <runtime>
    <gcServer enabled="true"/>
  </runtime>
</configuration>
```
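To check which GC type the process actually ended up with, the runtime exposes `GCSettings.IsServerGC` and `GCSettings.LatencyMode` in the `System.Runtime` namespace. A minimal sketch follows; the class and method names are hypothetical.

```csharp
using System;
using System.Runtime;

public static class GcModeReport
{
    // Returns a short description of the GC configuration
    // of the current process.
    public static string Describe()
    {
        string flavor = GCSettings.IsServerGC ? "server" : "workstation";
        return string.Format(
            "GC flavor: {0}, latency mode: {1}",
            flavor,
            GCSettings.LatencyMode);
    }
}
```

Logging `GcModeReport.Describe()` once at startup is a cheap way to confirm that the configuration was picked up.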